Are you an entrepreneur looking to stay ahead of the curve and take your business to the next level?

OpenAI's GPT-4 is the newest and most powerful language model yet, with the ability to understand and generate human-like language at an unprecedented level. And it doesn't stop there - this model is also able to process images, making it a game-changer for those looking to integrate cutting-edge AI technology into their business.

If you're curious about how GPT-4 can benefit your business and take your online presence to the next level, keep reading!

What is GPT-4?

GPT-4 is the newest, largest, and most powerful language model developed by OpenAI. Released in March 2023, it is the fourth iteration of the GPT language model, designed to understand and generate human-like language at an unprecedented level. While OpenAI has not disclosed the exact size of the model, experts speculate that it might have more than 1 trillion parameters.

This powerful multimodal technology is capable of processing images, analyzing data, and interpreting results with unparalleled accuracy. It can create all sorts of writing for you, from songs to blog posts, and it can even understand your unique writing style. This model is also able to analyze images and describe them in detail, making it perfect for reading through documents or interpreting pictures.

Overall, GPT-4 represents a major milestone in the field of language models. Its steerability, or the ability to control the model's output, makes it an incredibly valuable tool for entrepreneurs looking to incorporate cutting-edge AI technology into their business.

How is GPT-4 different from GPT-3 and GPT-3.5-turbo?

OpenAI has made some big changes to their deep learning system for GPT-4. They're working with Azure to design a new supercomputer to handle the heavy workload. On top of that, they've addressed the bugs and shortcomings of the previous version, GPT-3.5. All of these improvements add up to a more stable and predictable training process for the model. OpenAI is also refining their methodology so they can predict and prepare for future capabilities in advance, which is an important step to ensure safety as they scale their technology.

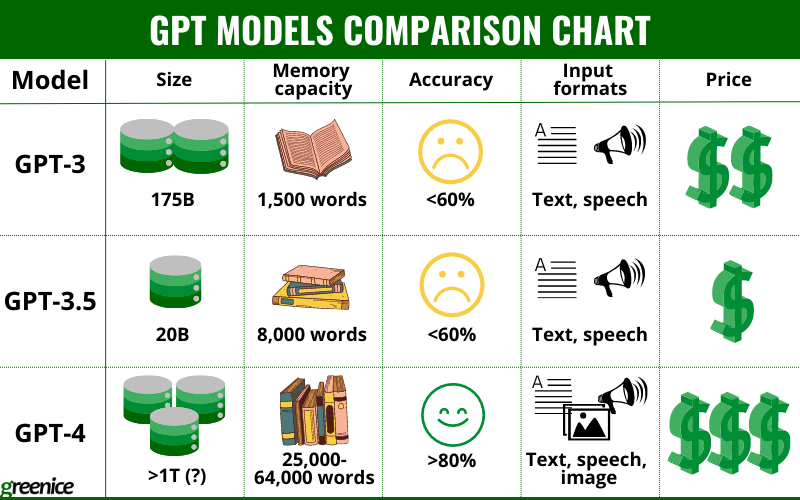

The most noticeable difference is the size of the GPT models. GPT-3 has 175 billion parameters and GPT-3.5 might have 20 billion, while GPT-4 is rumored to have over 1 trillion.

To make GPT-4 even smarter than its predecessors, OpenAI used reinforcement learning from human feedback to improve the output of the model. This iteration is more sensitive to tone of voice, understands memes, and passes most professional and academic exams with high scores. It is also much more capable of processing programming instructions and outperforms other models in English and other languages.

When it comes to GPT models, it's often said that they are still living in 2021 and are unaware of the latest concepts. GPT-4, however, shows some awareness of recent events; for example, it knows about the war in Ukraine. This is believed to be possible thanks to incorporating new data from prompts and learning from user feedback.

The accuracy of this model surpasses that of its predecessors: it shows a 40% improvement over GPT-3.5 in providing factual information, resulting in an accuracy rate of over 80%. For GPT-3 and GPT-3.5, the accuracy rate is 60% at most. Additionally, GPT-4 behaves more responsibly by declining disallowed requests in compliance with OpenAI policies: it is 82% less likely than GPT-3.5 to respond to such requests.

Moreover, its memory capacity (context window) ranges from approximately 6,000 to 25,000 words depending on the model, which corresponds to 8K and 32K tokens (the latest Turbo version can process up to 300 pages of text). This enhances its proficiency, especially when coupled with its capacity to extract information from web pages through provided links. For comparison, GPT-3.5 is limited to about 3,000 words and GPT-3 to about 1,500 words.

GPT-4 is able to perceive images and describe what it sees, unlike its predecessors, which can process only text. It is also the most expensive model of the family, due to its size and capabilities.

To sum up, GPT-4 still has some flaws. But unlike GPT-3 and GPT-3.5, it is a steerable multimodal model with higher accuracy and security. If you want to learn more about other GPT products, we described them in detail in these articles:

- ChatGPT vs GPT-4 vs GPT-3: Comparison chart for business owners

- How to use GPT-3 by OpenAI for SaaS, Websites, or Apps in 2024

What are the main disadvantages of GPT-4?

While GPT-4 has new and impressive capabilities, it still poses risks similar to those of previous models, such as generating inaccurate information, buggy code, or harmful advice. Here are the main concerns and limitations:

Data safety

Recently, entrepreneurs, governments, and schools have grown concerned about the whole field of LLMs and AI. They are worried about the possible harmful impact of these technologies on national safety and education. It seems that many countries are suspicious about how OpenAI can use people’s personal data.

Dependency on prompting

Prompting is both a blessing and a curse of these models. The model is highly sensitive to even small tweaks in a prompt, so the same question can produce the best or the worst answer. It also struggles with subtle meanings and typos. For example, if asked about the -gate suffix, it will only provide the “exit” meaning and not the scandal-related one.

Hallucinations

Despite a high rate of factual answers, it still might hallucinate and respond with nonsense. This is a common flaw of all GPT models.

High-stakes areas

Due to slightly outdated information, dependency on a good prompt from users, and a tendency to write nonsense from time to time, GPT-4 can’t be left without supervision. This is especially true for fields where misinformation may lead to horrific consequences, such as healthcare, finance, legal, and other high-stakes areas.

New possible risks

The additional abilities of the model might bring additional risks in areas such as AI alignment, biorisk, international security, cybersecurity, and trust and safety.

To help reduce the risks of using the model, OpenAI brought in more than 50 experts to test and evaluate the model's behavior in high-risk areas. The data and feedback collected from these experts contributed to improvements and mitigations for GPT-4, including the addition of a safety reward signal during RLHF training to help minimize harmful outputs. It's clear that OpenAI is taking proactive steps to ensure the safety and reliability of this powerful technology.

As GPT-4 continues to gain traction, concerns about the safety of the technology remain. However, OpenAI reports that its mitigations, including the safety reward signal added during RLHF (reinforcement learning from human feedback) training, have had a measurable effect: GPT-4 is 82% less likely than GPT-3.5 to respond to requests for disallowed content, and it responds to sensitive requests in accordance with policies 29% more often.

Here is what Sam Altman, OpenAI CEO, says about their technology: "We've got to be cautious here, and also, it doesn't work to do all of this in a lab. You've got to get all of these products out into the world and make contact with reality, make our mistakes while the stakes are low. All of that said, I think people should be happy that we're a little bit scared of this."

All in all, GPT-4 is designed to amplify human performance, not to replace us completely.

How can GPT-4 be used for websites, SaaS, or applications?

GPT-4 is a complete paradigm shift. It's currently the most advanced language model available and has a wide range of potential use cases. From AI chatbots to expanded web search and content creation, this technology is set to revolutionize the way we interact with AI.

Here are some examples of what it can do:

- Examine images of items in the refrigerator and suggest recipes accordingly.

- Find common themes between two articles by pasting them into the prompt and requesting a summary of the shared concepts.

- Serve as a programming and debugging assistant: write pseudocode, create code for applications like Discord bots, and correct errors when you paste error messages into the prompt.

- Describe a screenshot of a browser window in vivid detail, providing information on everything that the model observes.

- Identify what makes an image funny and explain it, which is a traditionally challenging task for AI.

- Code a website in JavaScript and HTML from a rudimentary hand-drawn layout uploaded as an image prompt, even if the handwriting is barely legible.

- Complete tax-related tasks using tax code and provide reasoning for each step, such as determining someone's standard tax deduction based on their personal details.

- Manage complex language in legal documents, such as document review, legal research memo drafting, deposition preparation, and contract analysis.

Many companies are still on a waitlist to try it, but several have already adopted GPT-4, including:

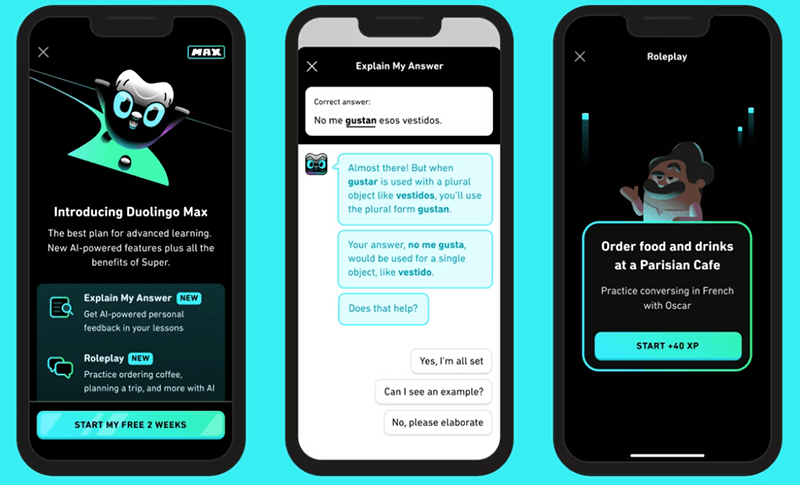

- Duolingo uses AI as a conversation partner for language learners. It also helps explain why users’ answers were wrong.

- Be My Eyes uses the image understanding feature of GPT-4 to assist visually impaired people with everyday tasks, e.g. navigating locations or identifying and describing products.

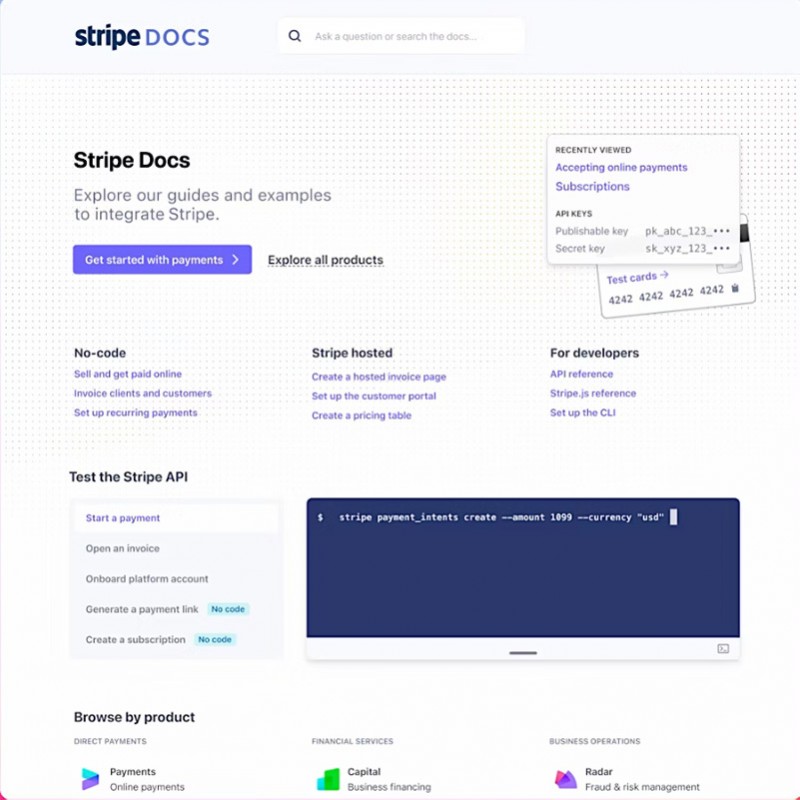

- Stripe uses GPT-4 for several tasks. It improves user experience by analyzing companies’ websites to understand how they use the platform. It helps fight fraud by identifying harmful activity among users. The model also serves as an AI assistant that answers users’ questions about Stripe documentation.

- Morgan Stanley has created an AI-powered chatbot to search their database and help personnel effectively use the company's knowledge.

- Khan Academy uses the model in its Khanmigo AI assistant. It can educate students by engaging in deep, tutoring-like conversations with them. It can also act as a classroom assistant for teachers, preparing materials for classwork.

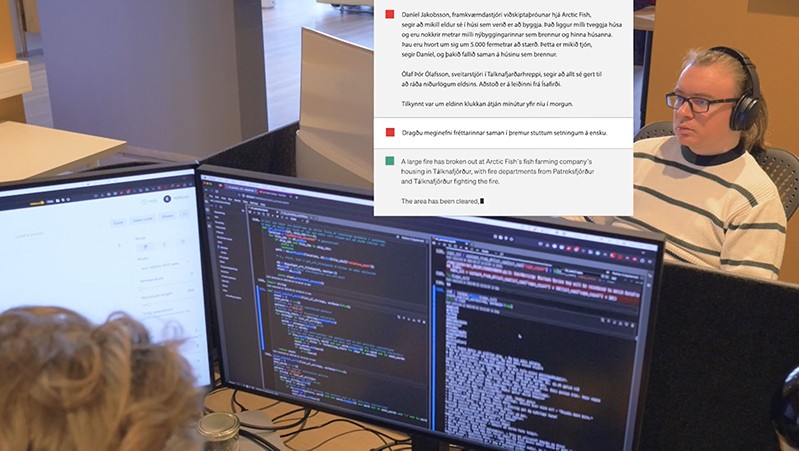

- The government of Iceland uses this technology to preserve the Icelandic language. A specialized team at the Language Planning Department is teaching the model Icelandic so that local companies can use GPT-4 in the national language rather than English.

- Microsoft, OpenAI's main investor, adopted GPT-4 in 2023 and implemented it in Bing’s AI chatbot. The new Bing aims to compete with the Google search engine. The Bing AI chatbot has also been added to the Edge browser's sidebar, and Microsoft has integrated the model into the Office suite to boost users’ productivity.

- ChatGPT Plus is a paid version of ChatGPT powered by GPT-4. It offers higher accuracy, fresher data, better security, and image analysis.

- Reid Hoffman used the technology as a co-writer for his book “Impromptu: Amplifying Our Humanity through AI”.

- Ambience Healthcare uses GPT-4 to create medical records from conversations between healthcare providers and patients. The aim is to reduce the burden on physicians by eliminating tedious aspects of their work, such as inputting data.

- Jackson Fall used it as a business assistant who helped him establish a profitable business with a budget of only $100. Though this case was rather intended for entertainment, Fall earned over $1,300 with the help of AI.

How to build products with GPT-4?

GPT-4 API access

At first, OpenAI offered API access to developers who had submitted exceptional evaluations of the model to OpenAI Evals. This approach allowed the company to gain insights into how the model can be improved for the benefit of everyone. Over time, the model has become more available to the general public, and OpenAI has recently given access to the GPT-4-Turbo preview.

There are two options of the model, the 8K and 32K engines, which vary in their memory capacity. Requests for them are processed at different rates, and access may be granted at different times. Additionally, researchers examining the societal implications of AI or AI alignment may request subsidized access to the model through OpenAI's Researcher Access Program.

The API for the 8K model is already available through the OpenAI website. The Turbo model with a 128K context is available as a preview.

Fine-tuning is also not available yet, but most likely, it will be announced soon.
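
The difference between the 8K, 32K, and 128K options is easier to see with a rough calculation. The sketch below picks the smallest option that fits a prompt plus some room for a reply. It is only an illustration: the `estimate_tokens` heuristic (about 4 tokens for every 3 English words) is an assumption and not OpenAI's tokenizer, the window sizes are the rounded figures used in this article, and `gpt-4-turbo-preview` is a placeholder label for the Turbo preview rather than a confirmed model identifier.

```python
# Rough sketch: choose the smallest GPT-4 option whose context window
# fits a request. Window sizes are the rounded figures from this article.
CONTEXT_WINDOWS = [
    ("gpt-4", 8_000),
    ("gpt-4-32k", 32_000),
    ("gpt-4-turbo-preview", 128_000),  # placeholder label (assumption)
]


def estimate_tokens(text: str) -> int:
    """Very rough estimate: assume about 4 tokens per 3 English words.

    This is a heuristic for illustration only; a real tokenizer
    will give different counts.
    """
    words = len(text.split())
    return (words * 4 + 2) // 3  # integer ceiling of words * 4 / 3


def pick_model(prompt: str, reply_budget: int = 500):
    """Return the first option that fits the prompt plus the reply budget.

    Returns None when the text is too long for every listed option.
    """
    needed = estimate_tokens(prompt) + reply_budget
    for name, window in CONTEXT_WINDOWS:
        if needed <= window:
            return name
    return None
```

Under these assumptions, a short question fits the 8K option, while a 9,000-word document (about 12,000 estimated tokens) needs the 32K one.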

GPT-4 Integration

Despite limited access, OpenAI provides documentation for the beta API that explains how to use GPT-4. Here is a basic description of the workflow for integrating it into your product or website:

- Use the OpenAI Chat API to call chat-based language models - The API lets you send requests to GPT-4 and receive its responses, so you can build your product on top of it.

- Pass an array of message objects to the model with a role and content for each message - Each message has a role (system, user, or assistant) and content (the text of the message). The model uses this set of messages to generate a response.

- Include conversation history to provide context - Add past messages to the message array. This helps the model understand the meaning behind the current message and generate a more accurate response.

- Extract the assistant's reply from the API response - The message generated by the model is in the 'choices' field of the response; read the message content from there.

- Keep the total number of tokens in the messages input and output below the model's limit - Tokens are the units of text that the model reads and generates, and each model has a limit on how many it can process. The tokens in the input messages and the output together must not exceed that limit.

- Experiment with options like temperature and max tokens to influence the output of the model - Temperature affects how random the output is, while max tokens limits the length of the output. Try different values to get the desired output.

- Improve the model's output by making instructions clear and trying different approaches - Refine your request to make it as clear and specific as possible. You can also try different approaches, such as asking the model to think step by step or to debate pros and cons before settling on an answer.
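
The workflow above can be sketched in Python using only the standard library. This is a minimal illustration, not OpenAI's official client: the helper names (`build_payload`, `extract_reply`, `send`) and the default values for `temperature` and `max_tokens` are our own assumptions. The endpoint and field names follow the Chat API's request and response format.

```python
import json
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_payload(system_prompt, history, user_message,
                  model="gpt-4", temperature=0.7, max_tokens=256):
    """Assemble the request body: system message, past turns, new user turn.

    `history` is a list of {"role": ..., "content": ...} dicts from
    earlier turns. The default temperature and max_tokens are
    illustrative values, not recommendations.
    """
    messages = [{"role": "system", "content": system_prompt}]
    messages.extend(history)  # conversation history gives the model context
    messages.append({"role": "user", "content": user_message})
    return {
        "model": model,
        "messages": messages,
        "temperature": temperature,  # higher values give more random output
        "max_tokens": max_tokens,    # upper bound on the reply length
    }


def extract_reply(response):
    """Pull the assistant's text out of the 'choices' field."""
    return response["choices"][0]["message"]["content"]


def send(payload, api_key):
    """POST the payload to the Chat API and return the parsed JSON response."""
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as resp:
        return json.load(resp)
```

To send a real request, pass the payload and your API key to `send`, then call `extract_reply` on the result. Append each user message and assistant reply to `history` so the next call keeps the context.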

How much does it cost to use GPT-4?

The API prices are still not final and might change over time. But the existing plans already show that this model is more expensive than GPT-3. There are 2 options which vary in their capabilities.

- The models gpt-4 and gpt-4-0314, which have a context length of 8,000 tokens (roughly 6,000 words), cost $0.03 per 1,000 prompt tokens and $0.06 per 1,000 sampled tokens. OpenAI has recently opened access to this option's API to a wider public.

- The models gpt-4-32k and gpt-4-32k-0314, which have a context length of 32,000 tokens (roughly 25,000 words), cost $0.06 per 1,000 prompt tokens and $0.12 per 1,000 sampled tokens. This option has limited access.

This makes this model the most expensive among all GPT products.

However, the newest GPT-4-Turbo model offers a much lower price for advanced functionality: for now, $0.01 per 1K input tokens and $0.03 per 1K output tokens.
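
To see what these prices mean for a single request, here is a small sketch of the arithmetic. The rates are the ones quoted above and may change; the function name and the `gpt-4-turbo` label are ours, used only for illustration.

```python
# Prices in USD per 1,000 tokens as (prompt, output), copied from the
# figures quoted in this article. They are not final and may change.
PRICES_PER_1K = {
    "gpt-4": (0.03, 0.06),
    "gpt-4-32k": (0.06, 0.12),
    "gpt-4-turbo": (0.01, 0.03),  # illustrative label for the Turbo preview
}


def estimate_cost(model: str, prompt_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD of one request."""
    prompt_price, output_price = PRICES_PER_1K[model]
    return (prompt_tokens / 1000) * prompt_price + (output_tokens / 1000) * output_price
```

For example, a request with 1,000 prompt tokens and 500 output tokens comes to about $0.06 on gpt-4 and about $0.025 on Turbo.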

Is there anything better than GPT-4?

Here are a few alternatives with similar capabilities:

- Bloom is an autoregressive Large Language Model that is capable of generating coherent text in 46 languages and 13 programming languages. This is achieved by training the model on vast amounts of text data using industrial-scale computational resources. Additionally, Bloom can be trained to perform text tasks it has not explicitly been trained for by casting them as text generation tasks.

- BERT is a large language model that is pre-trained on a large source of text, such as Wikipedia, to create language representations. These pre-training results can then be applied to other Natural Language Processing (NLP) tasks like question answering and sentiment analysis. BERT and AI Platform Training can be used to train various NLP models in just 30 minutes.

- LLaMA (Large Language Model Meta AI) is a foundational large language model that helps researchers advance their work in AI subfields. This model enables researchers who do not have access to large infrastructure to study large language models, thus democratizing access in this important and rapidly-changing field.

Here are smaller alternatives for specific goals:

- Alpaca is a model developed by Stanford University by fine-tuning the LLaMA 7 billion model using Hugging Face's training framework with 52,000 demonstrations of instructions. In a preliminary evaluation, Alpaca performed similarly to OpenAI's text-davinci-003 model in following single-turn instructions, but it is much smaller and less expensive to reproduce. Note that Alpaca is only meant for academic research and cannot be used for commercial purposes.

- ChatGLM-6B is a language model with 6.2 billion parameters that can be used for Chinese QA and dialogue. It can be deployed locally on consumer-grade graphics cards and was trained on 1T tokens of Chinese and English corpus.

- Open Assistant is a supervised-fine-tuning (SFT) model based on Pythia 12 billion, fine-tuned on 22,000 human demonstrations of assistant conversations collected through the Open-Assistant human feedback web app before March 7, 2023.

- BELLE-7B-2M is based on Bloomz-7b1-mt and fine-tuned on 2 million Chinese samples and 50,000 English samples from the open source Stanford-Alpaca. It has good Chinese instruction understanding and response generation capabilities.

- Alpaca-LoRA is a 7-billion-parameter LLaMA model fine-tuned to follow instructions. It is trained on the Stanford Alpaca dataset and uses the Hugging Face LLaMA implementation.

Conclusion

The release of GPT-4 by OpenAI is an important chapter in the development of language models. Its massive size and multimodal capabilities offer exciting opportunities for a wide range of applications, from creating content to being a powerful AI assistant.

As with any new technology, there are still many risks and challenges, but the benefits of GPT-4 are enormous. However, not every version of the API is open to everyone yet, so to integrate GPT-4 into your business you may need to wait for access to the version you need.

If you are interested in integrating language models into your business, we are happy to advise you.
