"Get ready to be amazed! Introducing the revolutionary AI language model, GPT-3. GPT-3, which stands for Generative Pre-trained Transformer, is set to revolutionize the world of Artificial Intelligence. For everything from writing articles, composing poetry, and even coding, this AI powerhouse can do it all." - says the technology about itself.

Indeed, GPT-3 is a wonder! It’s worth having a look at it and even using it for your business. Let’s find out how to make it work for you.

What is GPT-3?

GPT-3 is a language model developed by OpenAI. It's a state-of-the-art natural language processing (NLP) model trained on a massive dataset and capable of generating human-like text, translations, and answers to questions. To put it simply, GPT-3 can produce anything that has a language structure, from a summary of a text to code for an app. With 175 billion parameters, GPT-3 is one of the largest and most powerful language models ever built.

What language is GPT-3 coded in?

OpenAI has not published GPT-3's source code, so its exact technology stack is not public. Its predecessor, GPT-2, was released as Python code built on TensorFlow, an open-source machine learning library, and Python remains the most widely used language for artificial intelligence and machine learning.

But you are not limited to using only this technology stack. With the help of the API provided by OpenAI, GPT-3 can be integrated into various platforms and applications using different programming languages, such as Python, PHP, or JavaScript, which makes it easy for developers to add GPT-3 to their own projects.

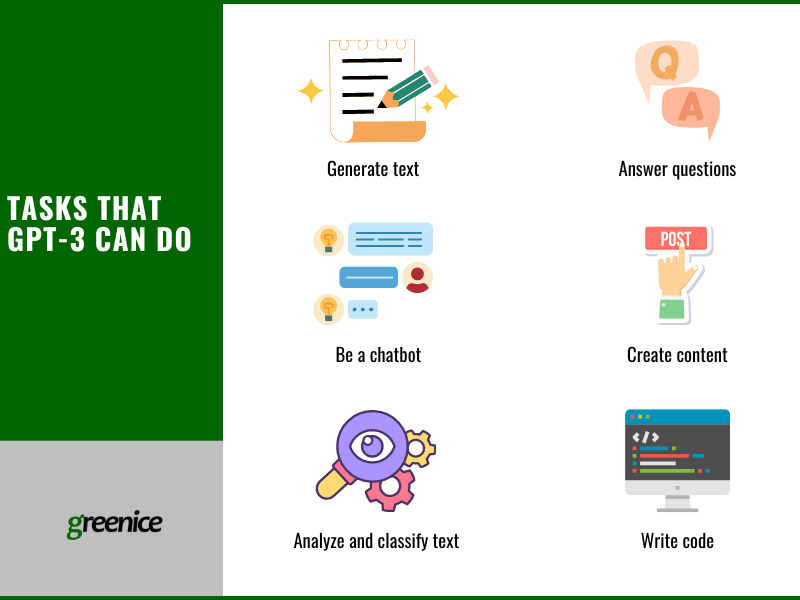

What tasks can GPT-3 do?

GPT-3 can perform a wide range of NLP tasks, including:

- Text generation: GPT-3 can generate text based on a prompt, so it can be used for such tasks as text completion, summarization, and translation.

- Question answering: GPT-3 can answer questions based on its training data, making it useful for tasks such as customer support or knowledge base management.

- Sentiment analysis: GPT-3 can classify the tone of a piece of text as positive, negative, or neutral, making it useful for impact analysis or brand monitoring.

- Chatbots: GPT-3 can be integrated into chatbots to provide human-like conversational experiences for customer support, e-commerce, and other applications.

- Text classification: GPT-3 can be fine-tuned to classify text, so it can be used for spam detection or categorization of news articles.

- Content creation: GPT-3 can be used to generate articles, blog posts, or product descriptions, making it useful for content marketing or SEO.

- Code writing: GPT-3 can also work with programming languages including Python or JavaScript. Thus, it can assist in the development of websites and apps.

Why is GPT-3 so good?

GPT-3 is considered one of the best language models for several reasons:

- Large training dataset: GPT-3 was trained on a massive amount of data, allowing it to understand a wide range of topics and generate text that is more human-like.

- Advanced architecture: GPT-3 uses a transformer-based architecture, which is well-suited to NLP tasks and has achieved state-of-the-art results in many benchmark tests.

- Unsupervised learning: Unlike previous language models that required large amounts of labeled data, GPT-3 was trained using unsupervised learning, meaning it was trained on vast amounts of text data without explicit annotations or labels.

- Generative capability: GPT-3 can generate text from a prompt, allowing it to perform tasks such as text completion, summarization, and translation, which are useful for a variety of applications.

- Fine-tuning: GPT-3 can be fine-tuned for specific tasks so it can be used in specific domains and applications, such as customer support or content creation.

- Prompting: Prompt programming lets users steer GPT-3 without retraining it. When given a new task in a prompt, GPT-3 does not alter its weights. Instead, the instructions and examples in the prompt condition its output, so it can perform tasks it was never explicitly trained for. In effect, each prompt turns GPT-3 into a specialist on the specific task presented to it.

Few-shot setting, meta-learning, and prompt programming make GPT-3 one of the most capable and flexible language models available, and its capabilities have drawn significant attention from researchers and businesses alike.
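To make the few-shot idea concrete, here is a minimal sketch of how such a prompt could be assembled. The function name `build_few_shot_prompt`, the `Text:` / `Sentiment:` layout, and the sample sentences are illustrative assumptions, not a format required by OpenAI.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    instruction: str,
    examples: List[Tuple[str, str]],
    query: str,
) -> str:
    """Assemble a few-shot prompt: instruction, worked examples, then the new input."""
    lines = [instruction, ""]
    for text, label in examples:
        lines.append(f"Text: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # The prompt ends with an open "Sentiment:" slot for the model to complete.
    lines.append(f"Text: {query}")
    lines.append("Sentiment:")
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    "Classify the sentiment of each text as positive, negative, or neutral.",
    [
        ("The delivery was fast and the support team was great.", "positive"),
        ("The app crashes every time I open it.", "negative"),
    ],
    "The package arrived on Tuesday.",
)
print(prompt)
```

The model's weights never change here. The two labeled examples inside the prompt are the only "training" the model sees for this task.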

What sets GPT-3 apart from other language models?

GPT-3 has many unique features that make it a standout among language models and have led to significant interest from researchers and industries that have an eye on NLP. Here is what makes GPT-3 different:

- Scale: GPT-3 is one of the largest artificial neural networks built to date. It has 175 billion weights (parameters), roughly ten times more than Microsoft's Turing NLG, the largest dense language model before it. This size allows it to perform well on a wide range of NLP tasks.

- Transfer learning: GPT-3 has been trained in a transfer learning fashion, meaning it can be fine-tuned with task-specific data, which leads to strong performance on that task.

- Unsupervised learning: GPT-3 is trained on a massive amount of unstructured text data, which lets it generate fluent text and produce high-quality translations and summaries with little or no fine-tuning.

- Human-like text generation: GPT-3 is capable of generating human-like text, making it useful for chatbots, content creation, and question-answering.

- Generalization: GPT-3 has demonstrated a remarkable ability to make generalizations about new and unseen data based on previous data, making it well suited for real-world applications.

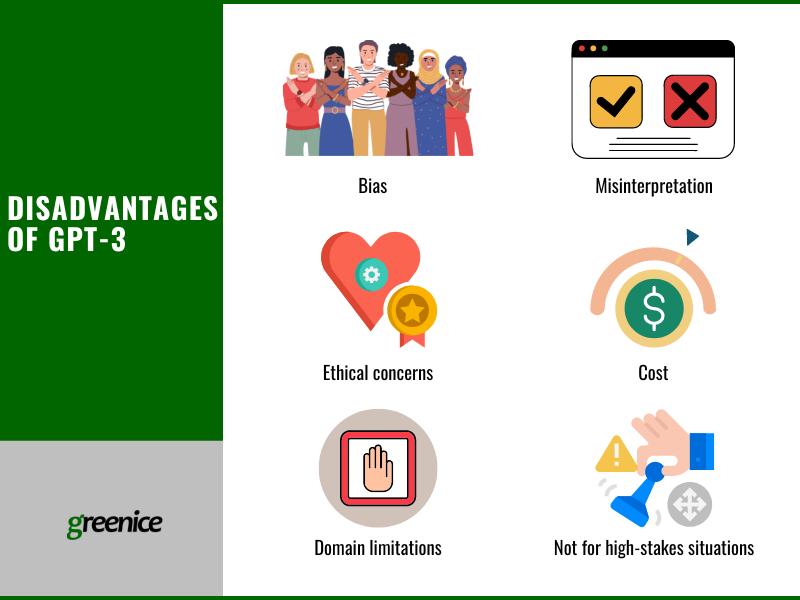

What are the main disadvantages of GPT-3?

Although GPT-3 is generally well-received and is taking the world by storm, it does have some limitations and challenges:

- Bias: Like many language models, GPT-3 has been trained on text data available on the internet, which can contain biases and misinformation, for example about gender, race, or religion. This means that the output it generates can also reflect those biases and errors.

- Misinterpretation: GPT-3 can generate text that is difficult to interpret or understand, particularly when it is generating text in response to ambiguous or unclear prompts.

- Ethical concerns: The sheer scale of GPT-3's capabilities and the potential for it to be used for malicious purposes raise ethical concerns. For example, GPT-3 could be used to generate fake news or manipulate public opinion. In one case, it wrote an article for a blogger, and only a few readers realized that it was not written by a human. It can also produce potentially dangerous information if tricked with a prompt (e.g. explaining how to make Molotov cocktails). Another problem is that GPT-3 can be used for academic cheating or website hacking.

- Cost: You need to pay to access the GPT-3 API, which may not be an option for some businesses.

- Limitations to specific domains: GPT-3 has been trained on a wide range of text data, but it may not have the specialized knowledge demanded by certain domains such as medicine or law.

- Not suitable for high-stakes situations: GPT-3 can give potentially dangerous advice. As long as we cannot be sure if the answer is right, we can’t rely solely on GPT-3 when it's a matter of life and death.

OpenAI and the research community are actively working to address these challenges. As for users, the better they write prompts, the better results they receive. Having additional information helps GPT-3 reduce the number of mistakes.

How can GPT-3 be used for websites, SaaS, or applications?

GPT-3's advanced NLP capabilities and its ability to generate human-like text make it a versatile tool that can be applied to a wide range of use cases in different domains.

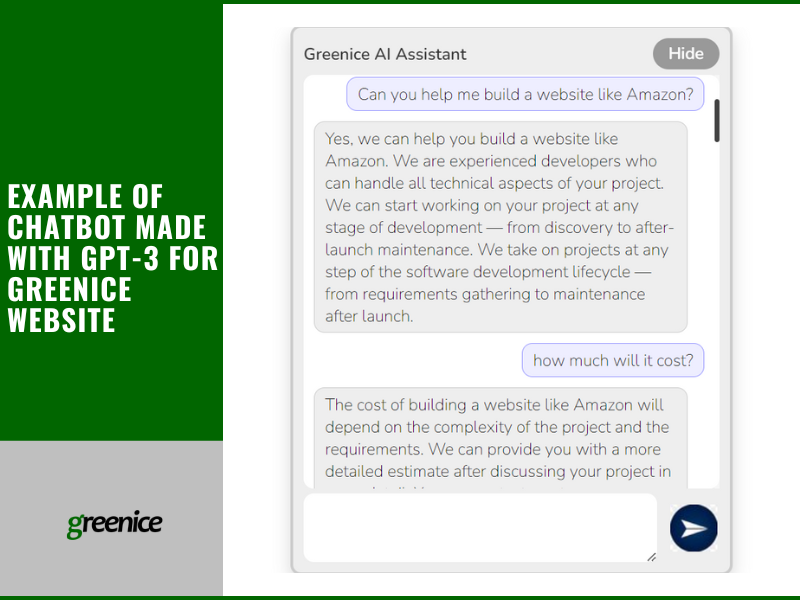

Websites

GPT-3 can be used by websites in several ways. Some of the most common are:

- generating content like articles, blog posts, and product descriptions.

- integrating it as an AI chatbot.

- enhancing website search capabilities, making it easier for users to find what they're looking for.

- personalizing content and experiences for users based on their preferences, history, behavior, etc.

- automatically translating content on a website, making it accessible to a wider audience.

Another option is to use GPT-3 (ChatGPT particularly) to write website code.

Apps

GPT-3 can be used for apps in many ways. It also can serve different types of business. Here are some examples:

- Microsoft Power Apps - low-code app development platform.

- Nabla - a cloud-based version of GPT-3 for medical advice.

- AI|Writer - creates simulated conversations with real and imaginary celebrities.

- Peppertype.ai - AI-powered instant content generator.

- ABtesting.ai - offers automated text suggestions for A/B testing, powered by GPT-3, for headlines, copy, and calls to action.

- Viable - analyzes customer feedback for businesses.

- Fable Studio - creates virtual reality characters. Algolia - employs GPT-3 for search and discovery. Copysmith - content generation / copywriting.

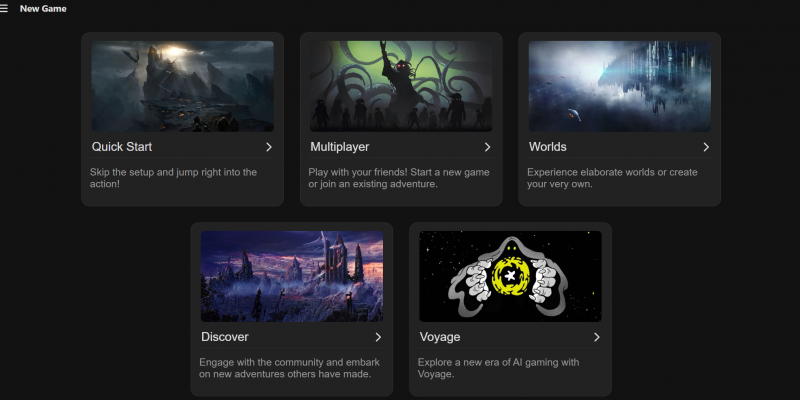

- Latitude - uses technology in an AI Dungeon game to continue the story.

Some people have found even more creative and complicated ways to use GPT-3. For example, Sharif Shameem created a "layout generator" that takes instructions in natural language and converts them into JSX code. He also made Debuild.co, a tool that has GPT-3 write code for a React app from a simple description.

Jordan Singer created a Figma plugin utilizing GPT-3 for design purposes. Shreya Shankar built a demo that translates equations from English to LaTeX. Paras Chopra developed a Q&A search engine that provides answers to questions along with the URL to the source of the answer.

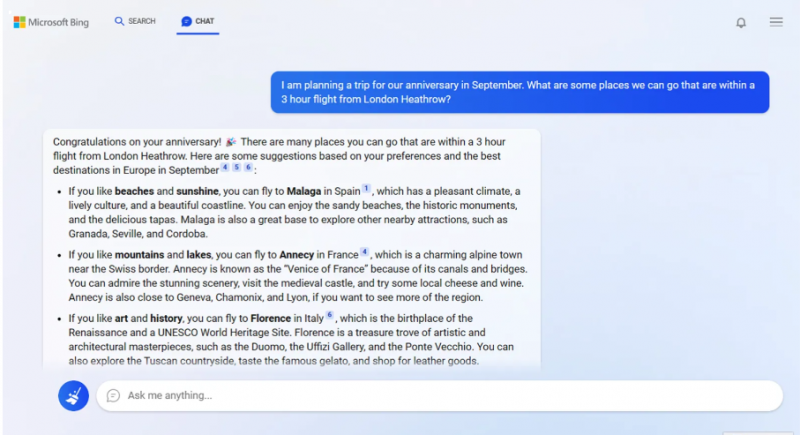

One more example: Microsoft built a chatbot into Bing using OpenAI's GPT technology. It is expected to improve both search results and user satisfaction.

How to build products with GPT-3?

GPT-3 has the potential to revolutionize many industries and applications. With basic technical skills, you can build a website or app with GPT-3 in three stages: integration, choosing a model, and training. Integration means connecting GPT-3 to your website or app through the API. Then, you choose one of four models, which vary in price and capabilities. Finally, you train the model to customize it for your business.

1) How to integrate GPT-3 into your product

Here is a step-by-step algorithm for integrating GPT-3 with your website:

- Familiarize yourself with GPT-3: Understand the capabilities and limitations of GPT-3. Read OpenAI's documentation and try out some of the examples to get a better understanding of how GPT-3 works. Note that you must comply with OpenAI's restrictions on commercial use and its limits on the amount of data you can send to GPT-3.

- Sign up for OpenAI API access: To use GPT-3 in your website, you will need to have access to the OpenAI API. You can sign up for access on the OpenAI website and receive an API key.

- Define the scope of your integration: Think about what kind of functionality you want GPT-3 to provide on your website. This will help you determine the type of data you need to send to GPT-3 and what kind of results you want to receive.

- Choose a model: Decide on a specific GPT-3 model that you want to use for your integration. OpenAI provides several different models, each with its own strengths and weaknesses, so choose the one that best fits your needs.

- Choose a programming language and framework: Decide on a programming language and framework that will be used to integrate GPT-3 with your website. Some popular options include HTML, CSS, and JavaScript for the frontend and Node.js, Ruby on Rails, or Django for the backend.

- Write the code for your integration: Use the programming language and framework to write the code for your integration. This code should include sending API requests to GPT-3 and receiving the results. You can use the results to dynamically generate content for your website.

- Test and debug your integration: Test your integration to make sure it works as expected. Debug any issues that arise and make improvements as needed.

- Deploy your integration: Once you have tested and debugged your integration, you can deploy it to your website so that people can start using it.

These are general steps to integrate GPT-3 with a website. The exact implementation will vary based on the specific requirements of your website and the API client library that you are using.
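The "write the code" step can be sketched with nothing but the Python standard library. This is a hedged illustration, not an official client: it assumes the completions endpoint and the `text-davinci-003` model name that the GPT-3 API exposed at the time of writing, and the helper names `build_completion_request` and `extract_text` are hypothetical. Check OpenAI's documentation for the current endpoint and model names.

```python
import json
import urllib.request

# Assumption: endpoint and default model name as documented for the GPT-3 API
# at the time of writing; both may have changed since.
API_URL = "https://api.openai.com/v1/completions"


def build_completion_request(prompt: str, api_key: str,
                             model: str = "text-davinci-003",
                             max_tokens: int = 100) -> urllib.request.Request:
    """Build (but do not send) a POST request asking GPT-3 to complete `prompt`."""
    payload = {"model": model, "prompt": prompt,
               "max_tokens": max_tokens, "temperature": 0.7}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def extract_text(response_body: str) -> str:
    """Pull the generated text out of a completions-style JSON response."""
    return json.loads(response_body)["choices"][0]["text"].strip()


request = build_completion_request("Write a product description for a red mug.",
                                   api_key="YOUR_API_KEY")
# To actually call the API (requires a valid key and network access):
# with urllib.request.urlopen(request) as response:
#     print(extract_text(response.read().decode("utf-8")))
```

In a real website, this code belongs on the backend so that the API key is never exposed to the browser.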

2) How to choose a language model?

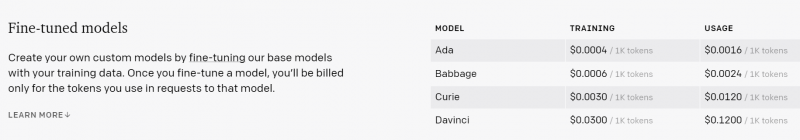

OpenAI offers four versions of the language model: Ada, Babbage, Curie, and Davinci.

Davinci is the most advanced and highest-performing model, but it is also the slowest and most expensive. It is highly creative and complex, and follows instructions better than other options. Ada, on the other hand, is the fastest model but with the lowest performance and least cost. It is suitable for simple tasks. Babbage and Curie fall between the two extremes.

Babbage is very fast, but can deal only with straightforward tasks. Curie is very capable, while being faster and considerably cheaper than Davinci.

OpenAI's website does not provide in-depth information on the architecture of each model. But there is a comprehensive list of the different versions of GPT-3 in the original GPT-3 research paper. The main difference between the models is in their number of layers and parameters. The models range from 12 layers and 125 million parameters to 96 layers and 175 billion parameters, with more layers and parameters leading to increased learning capacity, but longer processing time and higher costs.
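A rough calculation shows how layer count and model width translate into the parameter counts quoted above. This is a back-of-the-envelope sketch: the `12 * d_model^2` weights-per-layer rule is a common approximation for GPT-style transformers, and the widths (768 and 12288) and vocabulary size (50257) are assumptions taken from the GPT-3 paper's model table, not from this article.

```python
def estimate_parameters(n_layers: int, d_model: int, vocab_size: int = 50257) -> int:
    """Rough parameter count for a GPT-style decoder.

    Each layer holds about 12 * d_model^2 weights (4 * d_model^2 in attention,
    8 * d_model^2 in the feed-forward block). Biases, layer norms, and position
    embeddings are ignored.
    """
    per_layer = 12 * d_model * d_model
    token_embeddings = vocab_size * d_model
    return n_layers * per_layer + token_embeddings


# Assumed widths: 768 for the smallest model, 12288 for the largest.
smallest = estimate_parameters(n_layers=12, d_model=768)
largest = estimate_parameters(n_layers=96, d_model=12288)
print(f"smallest: ~{smallest / 1e6:.0f} million parameters")
print(f"largest:  ~{largest / 1e9:.1f} billion parameters")
```

Both estimates land within about 2 percent of the published figures of 125 million and 175 billion parameters, which is why the largest model is so much slower and more expensive to run.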

3) How to train GPT-3?

GPT-3 is a language prediction model: given a piece of text, it predicts the text that is most likely to follow. The model has been pre-trained on a large corpus of text, which allows it to capture the structure and workings of language. The pre-training process required significant computational resources and was estimated to have cost OpenAI $4.6 million.

In order to use GPT-3 for your business, you will need to further train GPT-3 for your specific project. This type of training is called fine-tuning.

We found 2 ways to fine-tune GPT-3:

- providing “pairs” of information (prompt and completion). There should be at least 100-200 such pairs. In this case, the model learns how to complete your prompts. For example, “What’s the name of your company? - The company is called X”.

- providing the model with company-specific information in the prompt (retrieved with NLP techniques, for instance). This way, the model gets a “hint” with every prompt: the system breaks down the text of the query and uses its core phrases to find similar sentences in a library you created. Then, based on the hint, GPT-3 paraphrases the sentence and answers the query. Such a library needs to be filled manually. Strictly speaking, this approach changes the prompt rather than the model's weights.
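Both approaches can be sketched in a few lines of Python. This is a minimal illustration, not OpenAI's tooling. The JSON Lines layout with `prompt` and `completion` keys follows the format OpenAI's fine-tuning guide described at the time of writing, so check it against the current documentation. The helper names `to_jsonl`, `pick_hint`, and `prompt_with_hint`, the simple word-overlap matching, and the sample library are all hypothetical.

```python
import json
import re
from typing import Dict, List


def to_jsonl(pairs: List[Dict[str, str]]) -> str:
    """Serialize prompt/completion pairs as JSON Lines, one pair per line."""
    return "\n".join(json.dumps(pair, ensure_ascii=False) for pair in pairs)


def words(text: str) -> set:
    """Lowercase a text and split it into a set of words, dropping punctuation."""
    return set(re.findall(r"\w+", text.lower()))


def pick_hint(query: str, library: List[str]) -> str:
    """Return the library sentence that shares the most words with the query."""
    query_words = words(query)
    return max(library, key=lambda sentence: len(query_words & words(sentence)))


def prompt_with_hint(query: str, library: List[str]) -> str:
    """Prepend the best-matching library sentence to the user's question."""
    hint = pick_hint(query, library)
    return f"Context: {hint}\nQuestion: {query}\nAnswer:"


# Way 1: training pairs (a real data set needs at least 100-200 of these).
pairs = [
    {"prompt": "What's the name of your company?",
     "completion": " The company is called X"},
]
training_file = to_jsonl(pairs)

# Way 2: a hand-written library of company facts (hypothetical content).
library = [
    "The company is called X.",
    "Support is available on weekdays from 9 to 5.",
]
prompt = prompt_with_hint("When is support available?", library)
print(training_file)
print(prompt)
```

A production system would likely replace the word-overlap matching with a proper search index or embeddings, but the flow stays the same: find the closest sentence, put it in the prompt, and let GPT-3 phrase the answer.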

Training GPT-3 requires some experience with machine learning and natural language processing. If you are not familiar with these topics, you may want to consider working with a machine learning engineer or data scientist who has experience with GPT-3 and training machine learning models.

How much does it cost to use GPT-3?

OpenAI's pricing for GPT-3 depends on the language model chosen. Each model has its own price per 1,000 tokens, which is equivalent to approximately 750 English words. There are four options available, each with its own features, and the cost is based on actual usage (pay-per-use).

When deciding which model to use, there is a balancing act between the speed and quality of the outputs. Ada is the fastest model among the four, but Davinci, being the premium option, is expected to generate higher quality and more elaborate responses to prompts. At the moment, the price for 1,000 tokens for each model is:

- Ada - $0.0004 for training, $0.0016 for usage

- Babbage - $0.0006 for training, $0.0024 for usage

- Curie - $0.0030 for training, $0.0120 for usage

- Davinci - $0.0300 for training, $0.1200 for usage

Basically, the more you train GPT-3 and the more text it produces, the more you pay.
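To see what these rates mean in practice, here is a small cost estimator. It is a sketch under stated assumptions: the prices are the ones listed above and will go out of date, the 750-words-per-1,000-tokens ratio is only a rule of thumb for English text, and the names `words_to_tokens` and `estimate_cost` are hypothetical.

```python
# Prices per 1,000 tokens in US dollars, copied from the list above.
PRICES = {
    "ada": {"training": 0.0004, "usage": 0.0016},
    "babbage": {"training": 0.0006, "usage": 0.0024},
    "curie": {"training": 0.0030, "usage": 0.0120},
    "davinci": {"training": 0.0300, "usage": 0.1200},
}

WORDS_PER_1K_TOKENS = 750  # rough rule of thumb for English text


def words_to_tokens(words: int) -> int:
    """Convert an English word count into an approximate token count."""
    return round(words * 1000 / WORDS_PER_1K_TOKENS)


def estimate_cost(model: str, training_tokens: int, usage_tokens: int) -> float:
    """Estimated bill in dollars for training plus usage on one model."""
    price = PRICES[model]
    return (training_tokens * price["training"] + usage_tokens * price["usage"]) / 1000


# Example: train on 75,000 words and then process another 75,000 words.
tokens = words_to_tokens(75_000)
for model in PRICES:
    cost = estimate_cost(model, training_tokens=tokens, usage_tokens=tokens)
    print(f"{model}: ${cost:.2f}")
```

Running the same workload through each model makes the trade-off visible: at these rates, Davinci costs 75 times as much as Ada for identical token counts.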

Is there anything better than GPT-3?

GPT-3 is one of the most advanced language models available, and it is currently considered to be the state-of-the-art in NLP. However, the field of NLP and AI is rapidly evolving, and new models and technologies are being developed all the time. Some new models might outperform GPT-3 in certain areas ‒ especially, if they are designed for specific tasks or domains, such as medical or legal NLP.

While GPT-3 is an incredibly powerful tool, there are still options that might suit you better. For example, GPT-3's training data ends in 2021, so models launched after that time may have more up-to-date knowledge. Or your business might need only a simple bot that does not require such complicated (and expensive) AI technology.

Will there be a GPT-4?

GPT-4 is already available via the API on the OpenAI website.

What are some alternatives to GPT-3?

Here are a few examples of other AI-based options:

- AlphaZero - single task deep learning system

- Bloom - big open-source multilingual model

- MUM - multimodal and multitasking language model

- LaMDA - model for creating powerful chatbots

- Wu Dao 2.0 - multimodal AI for generating text and creating images

- FLAN-T5 - open-source fine-tuned language model

- DALL-E - OpenAI technology for creating pictures from text

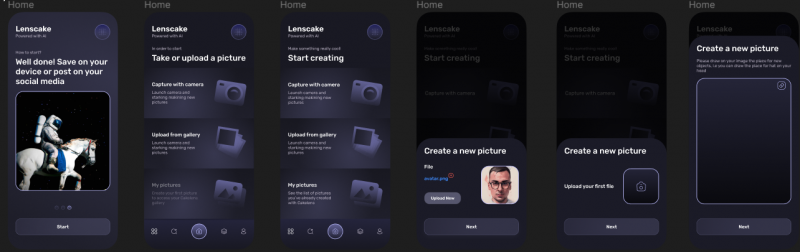

If you are interested in DALL-E, we are working on a React Native mobile app with a DALL-E AI art generator. Take a look:

There are many more technologies out there. They vary depending on complexity, features, price and other parameters. You will definitely find something that suits your business.

Conclusion

GPT-3 is a remarkable advancement in the field of AI and language processing. Its ability to generate human-like text, complete tasks, and perform language-related activities with remarkable accuracy is a testament to the progress made in this field. However, it's crucial to understand that AI is still in its infancy and that GPT-3 is just a stepping stone on the journey toward creating truly intelligent machines.

Nevertheless, the potential of GPT-3 and the impact it will have on various industries is immense. We expect to see more and more innovative applications of this technology in the near future. It's an exciting time to be a part of this rapidly growing field, and we can't wait to see what the future holds for AI and GPT-3.

If you can’t wait to integrate this technology into your business, we are here to help!
